“Most women who claim they were raped were rapebaiting. If they just let ugly men have sex once in a while, the world would be better for everyone. It’s just sex, they need to get over it”

This was a post from the now-banned subreddit /r/Incels, a community of men who claim to be “involuntarily celibate” because women are evil. It is one of many, many online communities that revolve around hatred and scapegoating. How is the internet affecting our society and making parallel communities toxic, and what can be done to stop the hatred from festering?

Redditland: population 234 million

A few years ago, I wrote about Reddit’s revolt against the change in management.

I complained about the management’s new direction of banning subreddits, which are microcommunities on one of the biggest online forums. I’ve always been amazed by Reddit’s political structure and its reflection of a real society, and I was fascinated by how the citizens of Reddit tried to reclaim their virtual streets by causing a blackout.

I’m starting to soften on the policy the management took on banning toxic subreddits.

I might even say: I was wrong.

I was once saddened that the decision to ban meant Reddit wasn’t allowed to reflect a real society, including all the rotten apples and fucked-up people in it. I think the disappointment came from a dark corner in me that grew up lurking on 4chan. I still can’t put my finger on why it amused me to see how fucked-up people can be, but it did.

I don’t think lurking in the cesspool of the internet ever affected me or my political views since I’m definitely a Libtard. The only remnant is maybe my repertoire of Dead Baby jokes (always a risky performance for a new crowd).

However, I sometimes forget that people can be susceptible to toxic ideas and, when exposed enough, convinced of them.

I’m seeing it happen more around me now, and am therefore no longer sad about Reddit’s decision to ban toxic communities online.

Incels, The_Donald and OneTrueGod

What finally pushed me to change my opinion was the recent ban Reddit put on /r/incels. As shown in the beginning, Incels believe they aren’t getting any sex because they are ugly and all women are superficial and basically “Nazi’s trying to exterminate the male race” by denying them sex. At a certain point they convinced themselves that “reverse rape”— not being allowed to have sex with someone—should be included in the #MeToo conversation.

yeah.

(A typical post on /r/incels; a collection could be found at /r/inceltears before that sub turned hateful too.)

Not only is it one crybaby-fest of men scapegoating women for not giving them any sex (newsflash: it’s mostly your ugly personality, not your ugly face), they also reinforce each other in their hatred and victimhood. Vice published a great analysis of the indoctrination that was being spread in the 40,000-subscriber Incel community, and why ultimately a ban was probably for the best.

Toxic ideas don’t just remain ‘harmless’ online; they infect people’s minds and have consequences in the real world. Extreme examples are shooters like Elliot Rodger, who killed 6 people and identified himself as an incel in his manifesto. He was a frequent visitor of subreddits like /r/redpill and /r/mensrights and is now being worshipped by other incels.

Letting dark communities exist has consequences. This isn’t just about incels or reddit, it’s about the spread of ideas that can be toxic. A subreddit like The_Donald grew as a joke, a bunch of trolls who thought it was funny to defend a racist misogynistic narcissist. They ended up becoming Trump’s online campaign front.

Even before Donald Trump got elected, I read too many stories of people saying they started in The_Donald for teh lulz and thought it was just funny, until they became so involved in the community that they started seriously campaigning for him.

(Donald Trump even gave an AMA, Reddit’s type of Q&A session, to /r/the_Donald. Obama did an AMA as well in 2012, but on the official AMA subreddit.)

I get it, in some weird way, people can get sucked into things. I’m part of a satire subreddit, /r/OnetrueGod, entirely devoted to Nicolas Cage, our lord and saviour.

It started as a joke, until I noticed I started getting really invested in it. I took a deep long look at myself after I tried to convince my friends to go stalk the hotel Nic Cage was staying in when someone had spotted the one true God in Brussels.

Jokes can get out of hand, and then suddenly they’re president of the “Free World”.

Don’t Feed The Trolls

Is there any argument for banning /r/The_Donald, which supports the legitimately elected President of the United States, except for the fact that they kind of organised their white-supremacy rally in it? If /r/Incels was banned, what makes /r/theredpill any less misogynistic? I’m still very cautious about the concept of censorship. There is the slippery slope argument that if we start here, what will be next? The argument is sometimes used as a fallacy, but we always need to think very deeply about what censorship can lead to, especially when considering the ban of an entire community.

Doing nothing has proven to have damaging effects though, since the internet can be an effective breeding ground for radicalization. There have been plenty of actors that saw the immense opportunity in a network of networks, and have used it to further a political agenda. Young Muslims who were first exposed to extremist ideas online were a goldmine for ISIS’ recruiting operations. Mexican president Peña Nieto (then president-elect) also recruited trolls, people who were already dicks on the internet in daily life, to spread lies about his opponents and distract from his own scandals (listen to the awesome Reply All podcast on this story).

The Russians have elevated the art of information operations, and use information warfare as a strategic tool. The invasion of Crimea came with a whole disinformation campaign, and troll armies have notoriously been used to influence the Brexit vote and the US elections, and have also been seen influencing Czech and French elections.

A lot of these operations recruit confused individuals (usually teenagers) and weaponize their frustrations. But not all of the radicalization was steered by some motive; most of it just grew organically out of a certain hatred.

Where that hatred comes from is a whole other question that we won’t delve too deep into. What I usually read is that the hatred comes from isolation, a lack of community and a lack of exposure to a diversity of perspectives.

“Banning an online community will just remove the container”

What should we do then? I’m still not sure banning or censorship is the solution to everything. I always thought people would just find the community or information somewhere else, and that you’re basically ignoring the problem. I am however becoming more convinced that allowing a community to fester in its hatred out in the open is not healthy. Banning means the community gets pushed deeper into the web. While we lose oversight and transparency, the toxic ideas also become less accessible, are less normalized, and are harder to stumble upon. They reach only the most extreme individuals in our society, instead of poisoning a whole generation.

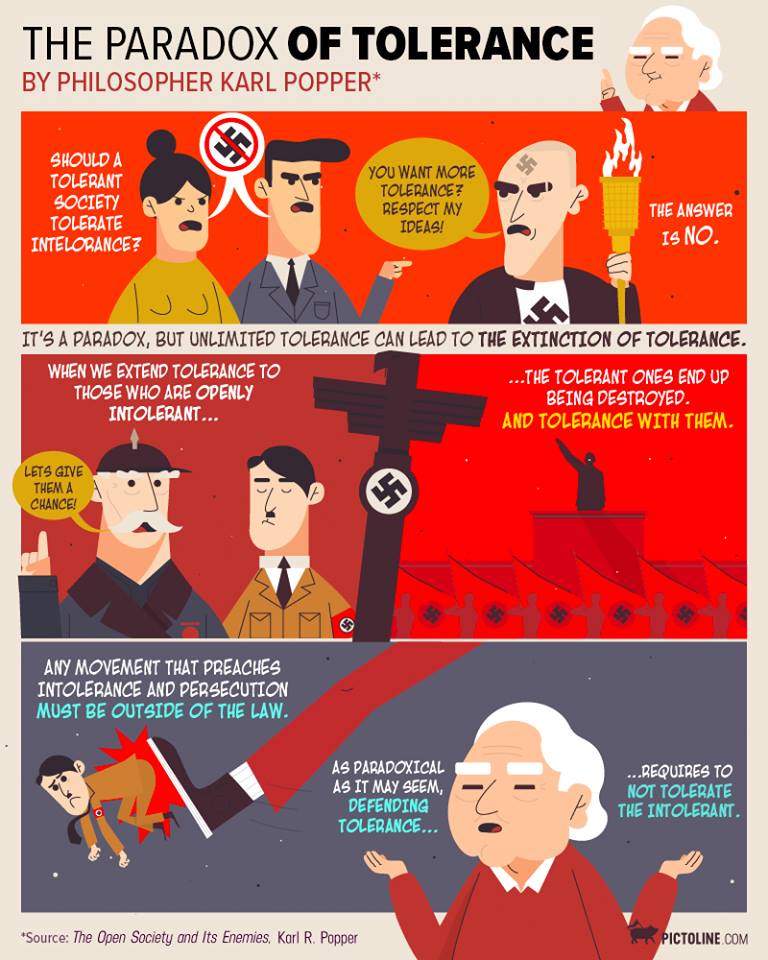

Where do we draw the line though? Are we prepared to give up part of our freedom and compromise on the idea of a free, open and democratic internet? As Karl Popper said in the paradox of tolerance, the only thing we should be intolerant towards is intolerance itself.

Plato said something similar in The Republic: tyranny evolves from democracy, and states stop being democratic when they’ve become too democratic.

Is banning communities the solution though?

Studies have shown that banning subreddits has an impact: after the first ban of /r/fatpeoplehate and /r/hamplanet in 2015, a lot of the former members of those communities slowed down on their hate.

Many didn’t though, which is why banning alone is not enough. Some people don’t just go away when the container of their community is removed; they take it somewhere else. We need a counternarrative to challenge toxic ideas, and we need to work against the isolation of these communities, so that moral borders can be stressed by more outsiders.

We’re finally gaining ground on Islamic extremism. ISIS seems to be failing to draw as many young recruits to Syria as they used to, and our European societies seem to be taking steps to reinforce community and reintegrate radicalizing youths.

The Vilvoorde approach to dealing with youths who want to go fight with ISIS in Syria will always be my favourite example of how to tackle radicalization. Vilvoorde had the highest percentage of Syria-fighters in Europe. Since they implemented the approach, they’ve had almost none.

The setup is simple: if people spot a jihadi recruit, they ask the city for help to gather a roundtable of people who can influence them: family, friends, school, mosque. They try to reconnect the youth with the community, and challenge some of their misinterpretations of the Quran. It’s all based on local trust and love; they invite the youth, but do not force them, to become part of a broader community again.

We need to do this for every man and woman that we are losing to hatred. Be it hatred against women, fat people, people of a certain colour or religion, “Social Justice Warriors”, but also hatred against white men, and definitely hatred against themselves. We need to learn to spot radical ideas and challenge them. It doesn’t matter if it’s “just online”; these people are as much human online as they are in the physical realm. We need to step up to offer a counternarrative. Make the borders clear, but also make them feel part of a broader community. Kill the “forever alone” trolls with kindness.

Community is important, but if it stays in self-reinforcing bubbles, community can be toxic. Banning microcommunities is a radical solution and a stopgap measure on the way to healing our society. Let’s hope we can also find a way for the bubbles to open up and diversify the communities people surround themselves with.

Let’s stop people like incels from thinking they’re justified in raping women because they have a right to sex. Let’s change those perspectives.

